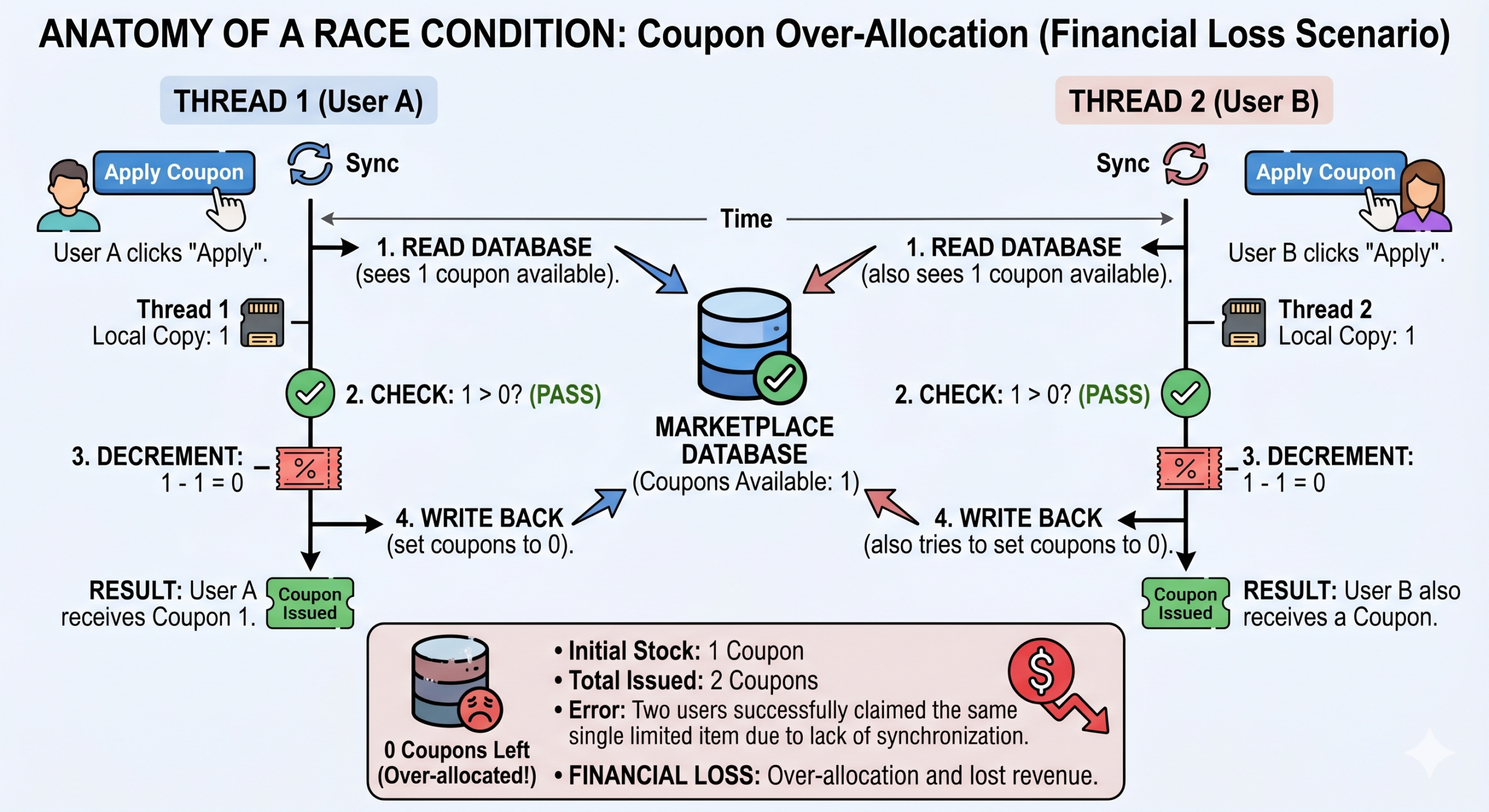

1. The Core Problem: How Does a Race Condition Occur?

Imagine you have exactly 50 discount coupons left in your database. When a user clicks “Apply,” the backend server typically executes code in three steps:

-

Read: Check the database for remaining coupons. (e.g.,

Coupons = 50) -

Check: Are the remaining coupons > 0? If yes, proceed.

-

Update: Subtract 1 from the total and grant the discount. (e.g.,

Coupons = 49)

High Traffic Scenario (Without Synchronization):

Suddenly, 500 users click “Apply” at the exact same millisecond.

-

Your server spins up separate threads (or processes) for all these requests simultaneously.

-

Thread 1 reads the database:

Coupons = 50. It moves to step 2. -

In that exact same nanosecond, Thread 2, Thread 3, and 70 other threads also read the database. They all read

Coupons = 50! -

All of them pass the check (

50 > 0) and proceed to update the database (50 - 1 = 49). -

The Result: 70 people get the discount, but the database count might show 49, or worse, it might decrement into negative numbers (e.g.,

-20). This is a Race Condition, and it results in a financial loss for the company.

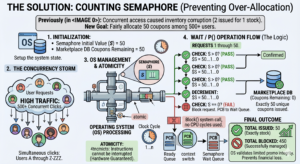

2. The Solution: How Does a Counting Semaphore Work?

The Operating System provides a concept called a Semaphore to solve this. At a basic level, a Semaphore is a special integer variable managed by the OS. The key feature of a Semaphore is that operations performed on it are “Atomic” (they cannot be interrupted halfway through).

For this scenario, we use a Counting Semaphore.

-

Initialization: We initialize the Semaphore (let’s call it $S$) to 50. ($S = 50$).

-

The Wait / P() Operation: Before any request can process the discount, it must pass through the Semaphore.

-

The rule of the Semaphore is:

-

If $S > 0$, I will immediately decrement it by 1 and allow the process to continue.

-

If $S == 0$, I will block (suspend) this process and return an error (“Coupons exhausted!”).

-

High Traffic Scenario (With Semaphore):

When 500 users click simultaneously:

-

The OS forces them into a queue.

-

The Semaphore processes them sequentially (one after another, in microseconds).

-

The first 50 requests will take the Semaphore from $S = 50$ down to $S = 0$.

-

When the 51st user’s request arrives, the Semaphore sees $S == 0$. It blocks that request, preventing it from executing the discount code.

-

The Result: Exactly 50 people get the discount—not a single person more.

Our portfolio Makeuser

3. OS Kernel Level: Wait Queues and PCBs (Process Control Blocks)

At an advanced/architectural level, a Semaphore is not just an integer. Inside the OS Kernel, it is a Data Structure (Struct) containing two things:

-

An integer value (the count).

-

A pointer to a Wait Queue (a Linked List of blocked processes).

When $S == 0$ and the 51st user requests a coupon, the OS doesn’t just make it “wait” in a loop. It does the following:

-

The OS takes the PCB (Process Control Block) of that specific thread. (The PCB stores the current state, memory limits, and CPU registers of a process).

-

The OS moves this PCB out of the CPU’s “Ready Queue” and puts it into the Semaphore’s Wait Queue.

-

The OS then executes a

block()system call. The thread goes to sleep and consumes absolutely zero CPU cycles. -

(In a Mutex scenario, when another process releases a lock via a

signalorV()operation, the OS uses awakeup()system call to bring a waiting process back to the CPU. This swap is called a Context Switch).

4. Hardware Level: CPU Atomicity (The Root Cause)

A major question arises: If 2 threads try to subtract 1 from the Semaphore at the exact same time, why doesn’t the Semaphore itself suffer from a race condition?

Because the Semaphore is protected by the Hardware (CPU) itself. OS developers rely on special CPU instructions (built into architectures like x86 or ARM):

-

Test-and-Set Lock (TSL): A CPU instruction that performs a “Read and Write” in one single, uninterruptible clock cycle.

-

Compare-And-Swap (CAS): Used in modern processors (where your Node.js or PHP servers run). It tells the CPU: “Only change this memory value to $Y$ if it is currently exactly $X$; otherwise, fail.”

Ultimately, software-level locks exist because the silicon hardware guarantees atomic operations.

5. Advanced OS Problems: Priority Inversion

When using locks (like Mutexes or Semaphores initialized to 1), a dangerous situation called Priority Inversion can occur.

-

The Problem: Suppose you have High ($H$), Medium ($M$), and Low ($L$) priority processes.

-

$L$ acquires the lock. $H$ needs the lock, so it gets blocked and goes into the Wait Queue.

-

Suddenly, $M$ becomes ready to run. Because $M$ has a higher priority than $L$, the OS scheduler preempts (pauses) $L$ and gives the CPU to $M$.

-

Now, $L$ cannot release the lock because it doesn’t have the CPU. And $H$ (the most critical process) is stuck waiting forever.

-

The Solution: Operating Systems use the Priority Inheritance Protocol. The OS temporarily boosts $L$‘s priority to match $H$‘s, ensuring $L$ finishes its task quickly, releases the lock, and allows $H$ to run.

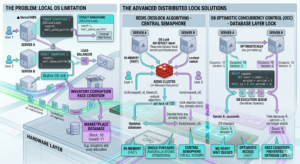

6. Modern System Architecture: Distributed Semaphores

As a Full-Stack developer, you must know that OS-level Semaphores only protect you if your backend is running on a single server.

If your marketplace scales and you are using Load Balancing across 3 different servers (e.g., on AWS or Vercel), an OS-level lock on Server A cannot stop a thread running on Server B!

The Advanced Solution:

In modern web architecture, we move the synchronization out of the OS and into the database or caching layer using Distributed Locks:

-

Redis (Redlock Algorithm): We use an in-memory datastore like Redis as the central Semaphore. All 3 servers must ask Redis for the lock before touching the database. Because Redis is single-threaded, it naturally processes requests atomically.

-

Optimistic Concurrency Control (OCC) in DB: We add a

versioncolumn to our database row. The SQL query becomes:UPDATE coupons SET count = count - 1, version = version + 1 WHERE id = 1 AND version = 5;If two servers try to update simultaneously, the first one changes the version to 6. The second server’s query will safely fail because

version = 5no longer exists, preventing the race condition without requiring heavy wait queues.